Scaling Up an Existing Application with Lambda Functions

What is Serverless?

Serverless infrastructure is the latest buzzword in tech, but from the name itself, it’s not clear what it means. At its core, serverless is the next logical step in infrastructure service providers abstracting infrastructure away from developers so that when you deploy your code, “it just works.”

Serverless doesn’t mean that your code is not running on a server. Rather, it means that you don’t have to worry about what server it’s running on, whether that server has adequate resources to run your code, or if you have to add or remove servers to properly scale your implementation. In addition to abstracting away the specifics of infrastructure, serverless lets you pay only for the time your code is explicitly running (in 100ms increments).

AWS-specific Serverless: Lambda

Lambda, Amazon Web Services’ serverless offering, provides all these benefits, along with the ability to trigger a Lambda function from a wide variety of AWS services. Natively, Lambda functions can be written in:

- Java

- Go

- PowerShell

- Node.js

- C#

- Python

However, on November 29th, AWS announced that the Runtime API now makes it possible to add any language to that list. As of this writing, adapters for Erlang, Elixir, COBOL, N|Solid, and PHP are in development.

What does it cost?

The Free Tier (which does not expire after the 12-month window of some other free tiers) allows up to 1 million requests per month, with the price increasing to $0.20 per million requests thereafter. The free tier also includes 400,000 GB-seconds of compute time.

The rate at which this compute time will be used up depends on how much memory you allocate to your Lambda function on its creation. Any way you look at it, many workflows can operate in the free tier for the entire life of the application, which makes this a very attractive option to add capacity and remove bottlenecks in existing software, as well as quickly spin up new offerings.
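To make the relationship between memory and free compute time concrete, here is a rough sketch that uses only the free tier figures quoted above. The function names are illustrative, and the request cost helper ignores compute charges beyond the free tier, which are priced separately and not covered here.

```python
# Free tier figures quoted in this article.
FREE_TIER_GB_SECONDS = 400_000
FREE_TIER_REQUESTS = 1_000_000
PRICE_PER_MILLION_REQUESTS = 0.20  # USD, after the free requests are used


def free_compute_seconds(memory_mb: int) -> float:
    """Seconds of execution per month the free tier covers at a given memory size."""
    memory_gb = memory_mb / 1024
    return FREE_TIER_GB_SECONDS / memory_gb


def monthly_request_cost(requests: int) -> float:
    """Request charges only; compute beyond the free tier is not modeled."""
    billable = max(0, requests - FREE_TIER_REQUESTS)
    return billable / 1_000_000 * PRICE_PER_MILLION_REQUESTS


if __name__ == "__main__":
    for memory_mb in (128, 512, 1024):
        print(memory_mb, "MB ->", free_compute_seconds(memory_mb), "free seconds")
```

A function configured with 128 MB gets eight times as many free seconds as one configured with 1024 MB, so choosing the smallest memory size that still performs well stretches the free tier furthest.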

What does a Lambda workflow look like?

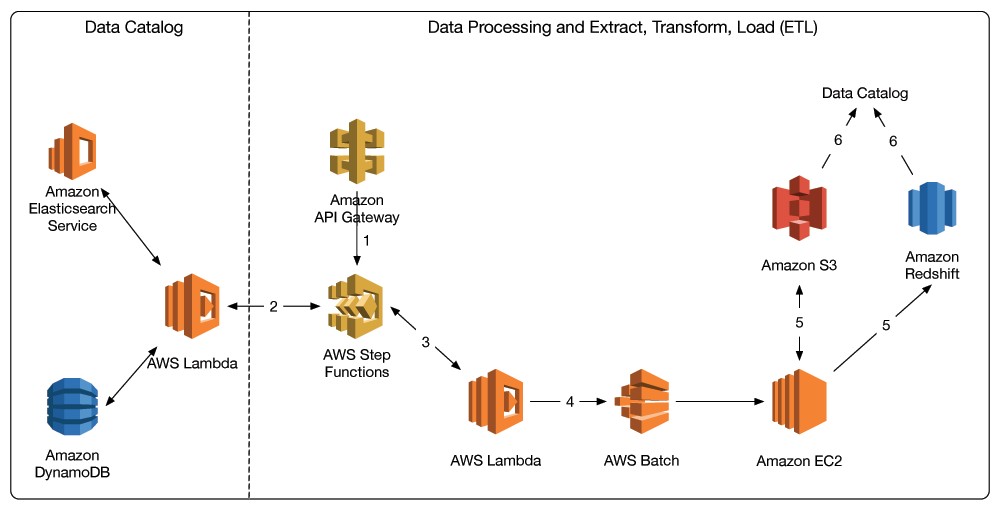

Using the various triggers that AWS provides, Lambda offers the promise of never having to provision a server again. This promise, while technically achievable, is only fulfilled for certain workflows, and often only by stringing together several services in complex ways.

However, if you have an existing application and don’t want to rebuild your entire infrastructure on AWS services, can you still integrate serverless into your infrastructure?

Yes.

In its simplest implementation, an AWS Lambda function can be the compute behind a single API endpoint. This means that rather than dealing with the complex dependency graph we see above, existing code can be abstracted into Lambda functions behind an API endpoint, which can then be called from your existing code. This approach allows you to take codepaths that are currently bottlenecks and put them on infrastructure that can run them in parallel and scale out automatically as load grows.
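As a minimal sketch of what such a function might look like, the handler below assumes API Gateway’s Lambda proxy integration, where the request body arrives as a JSON string in `event["body"]`. The `check_alert` function and the `conditions` field are hypothetical stand-ins for whatever existing code you move into the function.

```python
import json


def check_alert(alert: dict) -> bool:
    """Hypothetical stand-in for existing application logic."""
    conditions = alert.get("conditions", [])
    # Trigger only when there is at least one condition and all are true.
    return bool(conditions) and all(conditions)


def lambda_handler(event, context):
    """Entry point Lambda calls; returns a proxy-integration style response."""
    payload = json.loads(event.get("body") or "{}")
    triggered = check_alert(payload)
    return {
        "statusCode": 200,
        "headers": {"Content-Type": "application/json"},
        "body": json.dumps({"triggered": triggered}),
    }
```

Because the handler is an ordinary function, it can be exercised locally by passing it a dictionary shaped like the event, with `None` for the context.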

Creating an entire serverless infrastructure can seem daunting. Instead of exploring how you can create an entire serverless infrastructure all at once, we will look at how to eliminate bottlenecks in an existing application using the power that serverless provides.

Case Study: Resolving a Bottleneck With Serverless

In our hypothetical application, users can set up alerts to receive emails when a certain combination of API responses all return true. These various APIs are queried hourly to determine whether alerts need to be sent out. This application is built as a traditional monolithic application, with all the logic and execution happening on a single server.

It’s important to note that the application itself doesn’t care about the results of the alerts. It simply needs to dispatch them, and if the various API responses match the specified conditions, the user needs to get an email alert.

What is the Bottleneck?

In this case, as we add more alerts, processing the entire collection of alerts starts to take more and more time. Eventually, we will reach a point where the checking of all the alerts will overrun into the next hour, thus ensuring the application is perpetually behind in checking a user’s alerts.
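A quick back-of-the-envelope sketch shows where that point lies. The two seconds per alert used in the example is an assumed figure for illustration, not a measurement from a real application.

```python
SECONDS_PER_HOUR = 3600


def sequential_run_seconds(alert_count: int, seconds_per_alert: float) -> float:
    """Total time to check every alert one after another on a single server."""
    return alert_count * seconds_per_alert


def max_alerts_per_hour(seconds_per_alert: float) -> int:
    """Largest number of alerts that still finishes within the hourly window."""
    return int(SECONDS_PER_HOUR // seconds_per_alert)


if __name__ == "__main__":
    per_alert = 2.0  # assumed average time to query the APIs for one alert
    print("capacity:", max_alerts_per_hour(per_alert), "alerts per hour")
    print("2500 alerts take", sequential_run_seconds(2500, per_alert), "seconds")
```

Under that assumption the monolith tops out at 1,800 alerts per hour, and a run of 2,500 alerts would need 5,000 seconds, spilling well into the next window.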

This is a prime example of a piece of functionality that can be moved to a serverless function. Because the application doesn’t care about the result of the serverless function, we can dispatch all of our alert calls asynchronously and take advantage of the auto-scaling and parallelization of AWS Lambda to ensure all of our events are processed in a fraction of the time.

Refactoring the checking of alerts into a Lambda function takes this bottleneck out of our codebase and turns it into an API call. However, there are now a few caveats that we have to resolve if we want to run this as efficiently as possible.

Caveat: Calling an API-invoked Lambda Function Asynchronously

If you’re building this application in a language or library that’s synchronous by default, turning these checks into API calls will just result in each API call waiting for the previous one to finish. While it’s possible you’ll receive a speed boost because your Lambda function is set up to be more powerful than your existing server, you’ll eventually run into the same kind of problem we had before.

As we’ve already discussed, all our application needs to care about is that the call was received by API Gateway and Lambda, not whether it finished or what the result of the alert was. This means, if we can get API Gateway to return as soon as it receives the request from our application, we can run through all these requests in our application much more quickly.

In its documentation for integrating API Gateway and Lambda, AWS explains how to do just this:

To support asynchronous invocation of the Lambda function, you must explicitly add the X-Amz-Invocation-Type:Event header to the integration request.

This will make the loop in our application that dispatches the requests run much faster and allow our alert checks to be parallelized as much as possible.
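On the application side, the dispatch loop might look roughly like the sketch below. It assumes the `X-Amz-Invocation-Type` header has already been configured on the integration request in API Gateway, so the client only needs to confirm that each request was accepted. The URL, the alert payload shape, and the function names are all hypothetical.

```python
import json
import urllib.request
from typing import Callable, Iterable, List

# Hypothetical endpoint; replace with your own API Gateway URL.
API_URL = "https://example.execute-api.us-east-1.amazonaws.com/prod/check-alert"


def post_alert(alert: dict, url: str = API_URL, timeout: float = 5.0) -> int:
    """POST one alert to the endpoint and return the HTTP status code."""
    request = urllib.request.Request(
        url,
        data=json.dumps(alert).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return response.status


def dispatch_alerts(
    alerts: Iterable[dict], send: Callable[[dict], int] = post_alert
) -> List[dict]:
    """Send every alert and return the ones that were not accepted."""
    rejected = []
    for alert in alerts:
        try:
            status = send(alert)
        except OSError:  # urllib's URLError and HTTPError are OSError subclasses
            status = None
        # Any 2xx means the request was accepted, not that the check finished.
        if status is None or not 200 <= status < 300:
            rejected.append(alert)
    return rejected
```

Returning the rejected alerts gives the application something to retry or log, since a successful response here says nothing about the outcome of the check itself.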

Caveat: Monitoring

Now that your application is no longer responsible for ensuring these API calls complete successfully, failing APIs will no longer trigger any monitoring you have in place.

Out of the box, Lambda supports CloudWatch monitoring, where you can check whether there were any errors in the function execution. You can also set up CloudWatch to monitor API Gateway. If the existing metrics don’t fit your needs, you can always set up custom metrics in CloudWatch to ensure you’re monitoring everything that makes sense for your application.

By integrating CloudWatch into your existing monitoring solution, you can ensure your serverless functions are firing properly and always available.
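As one possible shape for a custom metric, the sketch below builds a data point and publishes it with boto3’s `put_metric_data`. The metric name, namespace, and dimension are assumptions chosen for this example, and `boto3` is imported lazily so the payload builder can be used and tested without the AWS SDK installed.

```python
def build_failed_checks_metric(function_name: str, failed_checks: int) -> dict:
    """Build one CloudWatch metric datum; names here are illustrative."""
    return {
        "MetricName": "FailedAlertChecks",
        "Dimensions": [{"Name": "FunctionName", "Value": function_name}],
        "Value": float(failed_checks),
        "Unit": "Count",
    }


def publish_metric(metric: dict, namespace: str = "AlertApp") -> None:
    """Send the datum to CloudWatch; requires boto3 and AWS credentials."""
    import boto3  # imported here so the builder above works without the SDK

    cloudwatch = boto3.client("cloudwatch")
    cloudwatch.put_metric_data(Namespace=namespace, MetricData=[metric])
```

A function like `publish_metric` could be called from inside the Lambda function whenever a check fails, and an alarm on the resulting metric could then feed into your existing monitoring.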

Tools for Getting Started

One of the most significant barriers to entry for serverless has traditionally been the lack of tools for local development and the fact that the infrastructure environment is a bit of a black box.

Luckily, AWS has built the SAM (Serverless Application Model) CLI, which uses Docker to give you a simulated serverless environment on your own machine. Once you get the project installed and API Gateway running locally, you can hit the API endpoint from your application and see how your serverless function performs.

This allows you to test your new function’s integration with your application and iron out any bugs before you go through the process of setting up API Gateway on AWS and deploying your function.
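One way to make that switch painless is to have the application read the API’s base URL from configuration. The sketch below assumes the local API is served at `http://127.0.0.1:3000`, the default address for `sam local start-api`. The `ALERT_API_BASE_URL` variable and the `/check-alert` path are hypothetical names.

```python
import os
from typing import Mapping

# Default address used by `sam local start-api`.
LOCAL_SAM_URL = "http://127.0.0.1:3000"


def alert_endpoint(
    path: str = "/check-alert", env: Mapping[str, str] = os.environ
) -> str:
    """Return the full endpoint URL, falling back to the local SAM API."""
    base_url = env.get("ALERT_API_BASE_URL", LOCAL_SAM_URL)
    return base_url.rstrip("/") + path
```

With no variable set, the application talks to the local simulation. Setting `ALERT_API_BASE_URL` to the deployed API Gateway URL points the same code at AWS.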

Serverless: Up and Running

Once you go through the process of creating a serverless function and getting it up and running, you’ll see just how quickly you can remove bottlenecks from your existing application by leaning on AWS infrastructure.

Serverless isn’t something you have to adopt across your entire stack or adopt all at once. By shifting to a serverless application model piece by piece, you can avoid overcomplicating your workflow while still taking advantage of everything this exciting new technology has to offer.

About the Author

Keanan Koppenhaver (@kkoppenhaver) is CTO at Alpha Particle, a digital consultancy that helps plan and execute digital projects that serve anywhere from a few users a month to a few million. He enjoys helping clients build out their developer teams, modernize legacy tech stacks, and better position themselves as technology continues to move forward. He believes that more technology isn’t always the answer, but when it is, it’s important to get it right.

About the Editor

Jennifer Davis is a Senior Cloud Advocate at Microsoft. Jennifer is the coauthor of Effective DevOps. Previously, she was a principal site reliability engineer at RealSelf, developed cookbooks to simplify building and managing infrastructure at Chef, and built reliable service platforms at Yahoo. She is a core organizer of devopsdays and organizes the Silicon Valley event. She is the founder of CoffeeOps. She has spoken and written about DevOps, Operations, Monitoring, and Automation.